I learned about AI harnesses and you should too

In a live stream with the guys from Altimate AI, we talked about something that was new to me:

AI harnesses.

And the more I think about it, the more I find it a really interesting topic.

Watch the live stream with Altimate here:

You might have actually seen this already without calling it that.

For example with OpenClaw. A lot of people looked at it and thought, okay, this is a cool setup around an LLM. But that is basically what a harness is. A structured way to control how the model operates, which tools it uses, and what it is allowed to do.

People are talking a lot about models, prompt engineering, context windows and all that. And yes, these things matter. But I think there is another topic that is much more important than most people realize right now, especially for data engineering.

That topic is AI harnesses.

And I think this is where a lot of people are still looking at the problem the wrong way.

It is not always the model

When an AI system fails, the first reaction is often: the model is not good enough. We need a better model. We need the newest model. We need more reasoning power.

Sometimes that is true.

But very often, especially in data work, that is not the real issue.

The real issue is that the system has no good way to operate inside the environment. It has no proper structure around the model. It does not know how to move through the task in a controlled way. And that is exactly where the harness comes in.

What a harness actually is

So what is a harness?

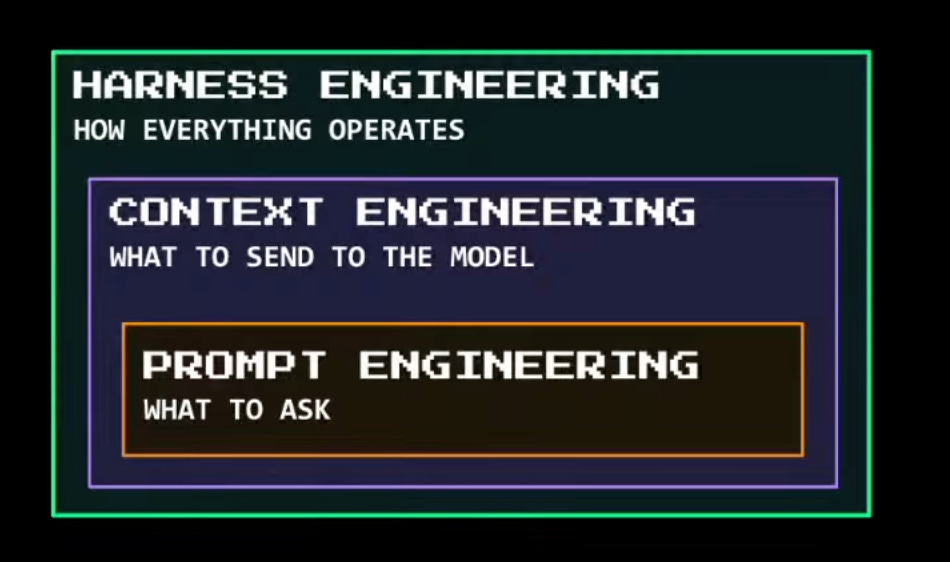

For me, the easiest way to think about it is this: the harness is the layer around the model that defines how the model actually works in practice.

It decides which tools are available.

It decides which workflows should be followed.

It decides what the system is allowed to do and what it must never do.

It decides where guardrails exist and how they are enforced.

It decides what information gets pulled in and how that information is used.

That is a big deal.

This is not just coding

Engineering work is not just writing code.

A lot of the work is understanding what is going on, figuring out what is broken, and working through messy situations where the answer is not obvious.

That is exactly where AI systems struggle today.

They can generate something that looks correct. But they often miss the deeper context, hallucinate details that are not actually there, or take shortcuts that would never be acceptable in a real environment.

Without a proper harness, the agent is basically improvising in a complex system.

The real problem in data work

Because data work is not happening in one place.

The repo is only one part of the story. The real truth is spread all over the place.

It is in the warehouse, the schemas, and the actual data values.

It is in lineage, orchestration, and dashboards.

It is in business definitions and naming conventions.

It is in all the weird exceptions that companies have built up over time.

That means an agent can look very smart on the surface and still fail badly.

It can generate a report that looks polished, produce SQL that seems reasonable, and sound confident.

And then you look closer and notice it missed models, invented columns, misunderstood risk, or gave you something that would never pass in a real team.

That is why I think the conversation has to move beyond “which model is best?”

A better question is: what kind of harness is this model operating inside?

Because the harness is what turns raw model capability into usable engineering behavior.

Context alone is not enough

A good harness brings in the right context, but it does more than that. It also provides tools and skills.

For example, if the system needs lineage information, schema metadata or warehouse inspection, the harness should give it exact ways to get that information. If the system needs to create or review something, the harness should help it follow a known process instead of improvising.

That is also where guardrails become real.

And this part is super important.

A lot of people still think guardrails mean putting a sentence in the prompt like “do not expose PII” or “do not touch production.” But that is weak. That is a suggestion. It might work for a while, until the conversation gets longer, the context shifts, or the system goes off track.

Real guardrails should live outside the model. For example:

a system should be technically unable to write in analysis mode

a PII check should be enforced before data leaves a boundary

unsafe actions should require a different mode or a review step

That is what gives teams trust.

Trust is the real bottleneck

And trust is the real bottleneck right now.

Most engineers are not rejecting AI because they hate automation. They reject it because they do not trust a system that can improvise freely inside a sensitive environment.

Honestly, I think that is fair.

The problem with vendor-shaped agents

The other thing that matters is independence.

A lot of vendor-built agents will naturally push you deeper into their own ecosystem. Again, that makes sense from their side. But from the user side, it can become a problem. Because now the harness is no longer only about helping you solve engineering tasks. It is also nudging you toward one stack, one platform, one way of doing things.

That may not be the best solution for your setup.

And since most companies have mixed environments anyway, this gets limiting very fast.

Why this will matter more

That is why I think this topic will become much more important.

Not because it sounds fancy, but because this is where the real control is.

Models will keep improving. That part is obvious.

But if you want AI to work like a serious engineering assistant, then the model alone is not enough.

You need the environment around it to be right.

That is the harness.

***

Ready to become a Data Engineer? Then join my Learn Data Engineering Academy today!

If you want to build real platforms, master the full stack, and close your skill gaps, check out my Data Engineer Coaching program.

If you are interested, but still have a few burning questions on your mind: feel free to contact me via hello@learndataengineering.com.

For more information and content on Data Engineering, also check out my other blog posts, videos and more on Medium, YouTube and LinkedIn!